Bibcode

Riesgo, Francisco García; Gómez, Sergio Luis Suárez; Rodríguez, Jesús Daniel Santos; Gutiérrez, Carlos González; Martín Hernando, Yolanda; Montoya Martínez, Luz María; Asensio Ramos, Andrés; Collados Vera, Manuel; Núñez Cagigal, Miguel; Juez, Francisco Javier De Cos

Bibliographic reference

Optics and Lasers in Engineering

Publication date:

11

2022

Number of citations

6

Number of refereed citations

6

Description

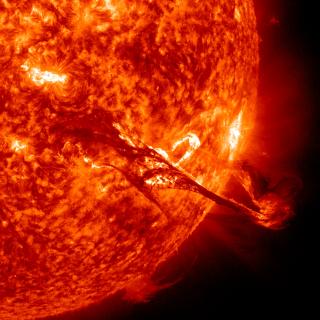

Correcting the phase variations induced by atmospheric turbulence is one of the most complex problems an Adaptive Optics (AO) system must deal with, as it must calculate the properties of the entire atmosphere traversed by the light from a limited number of measurements taken by ground-based telescopes. Traditional reconstructor systems used in AO are based on computational algorithms whose reconstruction quality improves with the number of measurements made by the telescopes' sensors. As a result, sensors are becoming larger and larger, with correspondingly higher financial cost. In recent years, Artificial Intelligence (AI) has become a real alternative to traditional computational methods as a reconstructor for AO systems. Fully-convolutional neural networks (FCNs) in particular have shown strong performance in Solar AO, demonstrating their ability to extract a great deal of valuable information from the recorded images for wavefront phase evaluation. In this research, the influence of the properties of the telescope's sensors and of the observations on the reconstructions made by the FCNs is measured, in order to find the configuration that best suits the performance of artificial neural networks (ANNs). The presented results indicate the way forward for future telescope sensors with reconstruction systems based on ANNs, to obtain higher quality reconstructions using fewer economic resources.

Related projects

Solar and Stellar Magnetism

Magnetic fields are one of the fundamental ingredients in the formation of stars and their evolution. At the birth of a star, magnetic fields can slow its rotation during the collapse of the molecular cloud, and at the end of a star's life, magnetism can be key to the way in which the layers are lost

Carlos Cristo Quintero Noda